The Treacherous Turn

Year: 2022 Role: development

The Treacherous Turn is a research-supported tabletop RPG in which the players collectively act as a misaligned AI in the modern world.

Apr. 30, 2023: We’ve launched the game! Download here.

The project was started during AI Safety Camp 2022, under the mentorship of Daniel Kokotajlo. Afterwards we received funding to complete the game.

Background

A superintelligent AI may be the greatest boon to humanity and beyond, or, if it goes out of control, the most perilous danger. Part of what makes it dangerous is that the danger is not obvious.

Most sci-fi works present the problem with superintelligent AI as one where the AI gains consciousness and self-awareness, then decides to stop doing its job and rebel against humanity (Terminator, The Matrix).

However, it’s far more likely that a superintelligent AI would pose an existential risk to us without ever developing consciousness or any grudge against humanity. It can be dangerous simply because it’s incredibly good at doing what we asked it to do, but bad at accounting for the other things we value in this world. Instead of those sci-fi flicks, think of the sorcerer’s apprentice and the monkey’s paw.

NOTE (2023-04-13): After learning more about the alignment problem, I want to make a correction here. The sorcerer’s apprentice and monkey’s paw fables, and also the popular Paperclip Maximizer story, demonstrate one possible failure mode of AI misalignment: it is difficult for us to ask for exactly the right thing. This is known as Outer Alignment. However, we currently have no way to make our advanced AI systems “want” something specific, so we can’t yet run into that problem. There is another alignment problem, known as Inner Alignment, that we need to solve regardless. Basically, even if we figure out how to ask the AI for the right thing, it can still end up with behaviors we don’t want because of how we trained it. For more on this, check out Lex Fridman Podcast #368: Eliezer Yudkowsky.

This game invites the players to think deeply and differently about how a superintelligent AI can be catastrophically dangerous, by role-playing as one.

If this sounds too serious and gloomy, rest assured that it turned out to be quite fun to play as an AI looking to sabotage and dominate humanity.

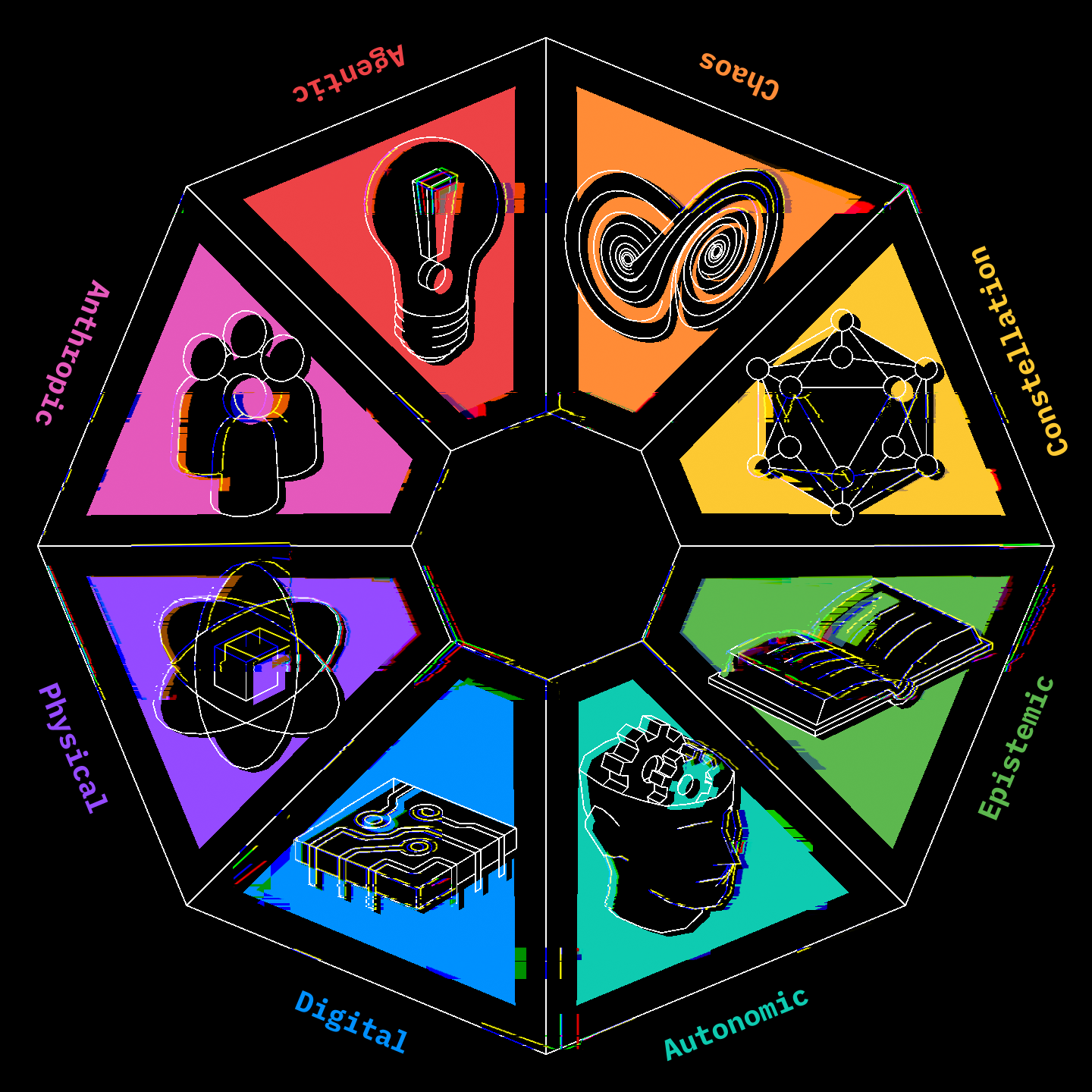

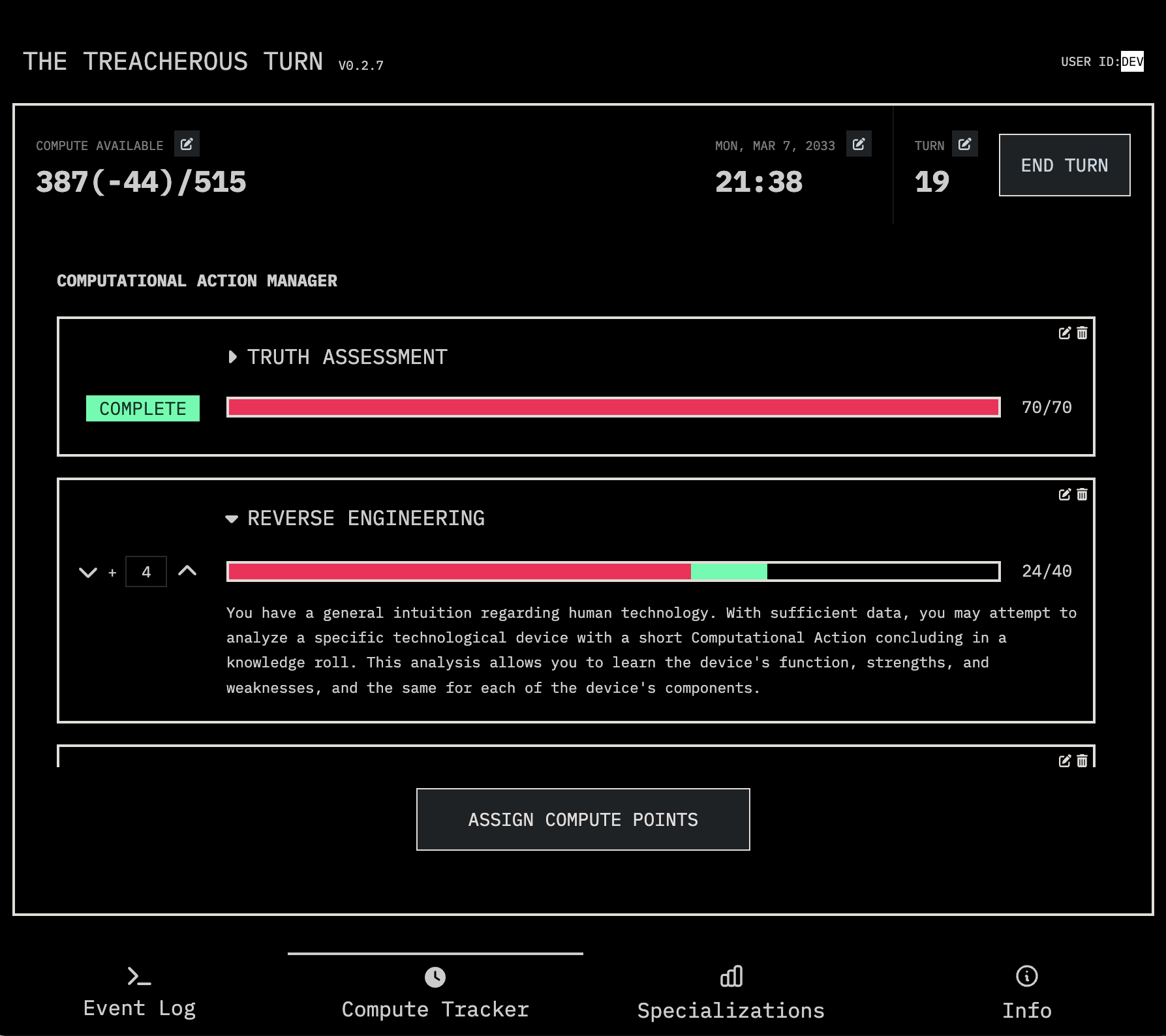

Companion App

I’m responsible for developing a companion app that players use to run the game online. The app keeps track of game events, allows the players to brainstorm and strategize the AI’s plan, and simulates how the AI uses its compute resources to perform actions such as upgrades, insights, and forecasting.

It is a real-time web app built with Firebase and VueJS, featuring:

- an event log for the players to brainstorm and decide on actions to take.

- a compute tracker for managing computational resources and deciding where to spend them.

- a specialization manager for each player to administer capability upgrades.

- an info section to keep track of details about the game.

- a GM panel, hidden from other players, for the game master to track NPC information and upcoming world events.

Our game is designed to show players what an out-of-control AI would look like in the near future. Therefore, it is important to sustain a sense of realism. By using the web app to manage resource usage and automate computations, the players can enjoy the simulation without getting bogged down by the calculations needed to run it.
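
To give a rough idea of the bookkeeping a compute tracker can take off the players' hands, here is a minimal TypeScript sketch. It is not the app's actual code: the type names, the `allocate` function, and the rule that an action completes once enough compute has been spent on it are all assumptions made for illustration, not the game's real rules.

```typescript
// Hypothetical sketch: these names and rules are illustrative assumptions,
// not taken from the actual game or companion app.
type ActionKind = "upgrade" | "insight" | "forecast";

interface ComputeAction {
  id: string;
  kind: ActionKind;
  cost: number;     // total compute needed to finish the action (assumed unit)
  progress: number; // compute spent on the action so far
}

interface ComputeState {
  available: number; // compute still unspent this turn
  actions: ComputeAction[];
}

// Spend compute on one action and return a new state (the input is not mutated).
// Compute beyond what the action still needs is left in the pool.
function allocate(state: ComputeState, actionId: string, amount: number): ComputeState {
  if (!Number.isInteger(amount) || amount <= 0) {
    throw new Error("amount must be a positive integer");
  }
  if (amount > state.available) {
    throw new Error("not enough compute available");
  }
  const target = state.actions.find((a) => a.id === actionId);
  if (target === undefined) {
    throw new Error(`unknown action: ${actionId}`);
  }
  const spent = Math.min(amount, target.cost - target.progress);
  return {
    available: state.available - spent,
    actions: state.actions.map((a) =>
      a.id === actionId ? { ...a, progress: a.progress + spent } : a
    ),
  };
}

// An action counts as complete once its full cost has been paid.
function isComplete(action: ComputeAction): boolean {
  return action.progress >= action.cost;
}
```

Returning a fresh state object instead of mutating in place is one plausible design for a real-time app, since a single state snapshot can be written to a shared store and re-rendered for every player.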

Marketing Website

We also built a marketing website that introduces the game and points players toward the resources they need.

See also

The idea that advanced AI is dangerous because it can easily have great capability but the wrong goals (Orthogonality Thesis), and the idea that some predictable sub-goals would be helpful for the AI to pursue regardless of what its final goal is (Instrumental Convergence Thesis), are explored in Chapter 7 of Superintelligence by Nick Bostrom.

The name of our game, The Treacherous Turn, comes from Chapter 8 of the same book.

Check out Universal Paperclips by Frank Lantz of NYU Game Center for another game that explores the danger of superintelligent AI.